If you’re creating an HTML5 mobile web application, this is the minimum you need to give your users what Google calls a “homescreen launch experience” on an iOS or Android mobile device:

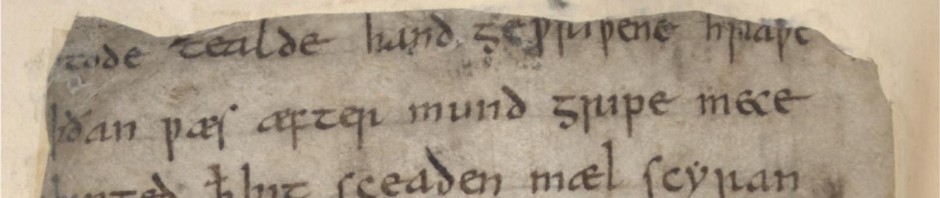

    <!doctype html>
    <html>
      <head>
        <title>Awesome app</title>
        <meta name="viewport" content="width=device-width">
        <meta name="mobile-web-app-capable" content="yes">
        <link rel="shortcut icon" sizes="196x196" href="/icon.png">
      </head>
      <body></body>
    </html>

The “shortcut icon” link element is pretty straightforward: it simply provides a pretty, app-style icon to save to the Android launcher (iOS Safari looks for its own “apple-touch-icon” link and “apple-mobile-web-app-capable” meta element instead). The “mobile-web-app-capable” meta element, on the other hand, strikes right to the core of what you’re doing: are you creating a web app, or a web site?

I’m a web app

Here’s what Chrome for Android gives me if I save a web app to the homescreen (launcher), then tap on that icon:

- The app gets its own entry in the task switcher.

- All the browser chrome is hidden, and there’s no way to add a new tab, create a bookmark, change options, etc.

- If I follow a link outside my domain, Chrome will animate a normal browser launching, to warn me that I’m outside the app.
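
To make the last point concrete, here is a minimal sketch of how an app could predict which links will bounce the user out to the regular browser. It assumes that "outside my domain" can be approximated by comparing URL origins; Chrome's real rule may differ, and `isExternalLink` and the example URLs are hypothetical names made up for illustration.

```javascript
// Hypothetical helper: true when `href` points outside the app's origin.
// `appUrl` is the page the app was launched from (an assumption of this sketch).
function isExternalLink(href, appUrl) {
  const app = new URL(appUrl);
  // Resolve relative links against the app URL, as a browser would.
  const target = new URL(href, app);
  // Same scheme, host, and port means the link stays inside the app.
  return target.origin !== app.origin;
}

console.log(isExternalLink("/airports/CYOW", "https://example.org/app/"));       // false
console.log(isExternalLink("https://example.com/", "https://example.org/app/")); // true
```

An app could use a check like this to mark outbound links visually, so that the jump to the full browser doesn't come as a surprise.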

I’m a web site

If I don’t include “mobile-web-app-capable,” then Chrome for Android will still let me create an icon on the homescreen, but when I tap on it, I’ll see my regular browser launch, with all its chrome, tabs, bookmarking, etc.
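
If a page wants to know which of these two situations it is in, one possible approach is to ask the browser. The sketch below relies on the standard `display-mode` media feature and on the non-standard `navigator.standalone` flag that iOS Safari sets. Whether a particular Chrome version reports a “mobile-web-app-capable” launch this way is an assumption to verify on a real device, and `launchMode` is a hypothetical name.

```javascript
// Sketch: report whether the page was launched as an "app" or is in a normal tab.
// `win` is a window-like object, passed in so the function can also run outside a browser.
function launchMode(win) {
  // Standard CSS media feature, exposed to script through matchMedia().
  const standalone =
    typeof win.matchMedia === "function" &&
    win.matchMedia("(display-mode: standalone)").matches;
  // Non-standard flag that iOS Safari sets for pages launched from the home screen.
  const iosStandalone = Boolean(win.navigator && win.navigator.standalone);
  return (standalone || iosStandalone) ? "app" : "site";
}

// In a page, call it as: launchMode(window)
```

A site could use the result to, say, show its own back button only when the browser chrome is missing.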

So which am I?

A web app is a loner. It has several advantages over a native mobile app (cross-OS/-device compatibility, avoiding app-store bottlenecks and censorship, more flexible security model, linkable, etc.) and several disadvantages (can’t handle an Android Intent, weaker notification support), but its plan is clearly to stand on its own, like a native mobile app. A web app is designed around actions: with a clever combination of HTML5, CSS, JavaScript, and newer stuff like AppCache and localStorage, you could create (e.g.) a Twitter web app that was very similar to the Twitter native app (important caveats). The web app designer doesn’t want her app open in one browser tab with a different site open in another, while the user switches back and forth. Online video games, interactive edit/design tools, and anything that is supposed to feel a bit like a MacOS or Windows program installed from a CD-ROM 15 years ago are likely apps.

A web site is a social creature. It’s orthogonal to a native mobile app, because it inhabits a different universe. A web site is RESTful, designed around pages (more generally, “resources”). Most of those pages contain either information a user wants to see, with links for navigating to related information, or a means to create, change, or delete that information (there’s also typically a search feature). Unlike web apps, web sites embrace the browser chrome, which provides an important part of the user experience. Because the browser already allows a user to search for text within a page or bookmark any page, the web site itself doesn’t have to provide that support. Similarly, the browser lets a user open multiple views into the same site in different tabs (so that a user can view two pages at the same time), while an app treated as an app has to have that support built in. Wikipedia, blogs, tutorials, newspapers and magazines, most online discussion forums, and my own OurAirports are all examples of things that are most naturally web sites.

Last thoughts

I’m starting the process of making OurAirports responsive and otherwise mobile-friendly, and one of the first things I tried was adding “mobile-web-app-capable” and then saving the icon to my Android homescreen. It worked exactly as advertised: it was cool having the site open up as if it were a phone app, appear in the task switcher, etc. But it took me only minutes to realise that I’d crippled it. A user launching OurAirports that way could no longer bookmark a specific airport page to come back to it later, could no longer open two airports in different tabs, and could no longer search for text in a long comment stream. I removed it and let the browser chrome come back, because really, that browser chrome is a lot of what makes a web site useful.

So when you’re designing your next web thingy, ask yourself a few questions:

- Is it valuable for users to be able to search for text within a page, bookmark pages, and open multiple tabs (site)?

- Is it mainly about manipulating an external resource, such as a picture or diagram (app)?

- Does it have lots of pages with relatively stable URLs (site)?

- Can it potentially provide a reasonably complete offline experience using only a small number of files containing markup, code, and data (app)?

- Is it designed as part of a bigger web, linking out and receiving inbound links (site)?

- Would browser chrome distract rather than help the user (app)?

- Is it something that Google should index and make searchable (site)?
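
For anyone who likes a tally, the questions above can be turned into a rough checklist. This is only a sketch: giving every question equal weight is an assumption of the example rather than a rule, and the key names and the `verdict` function are made up for illustration.

```javascript
// Each key mirrors one question from the list; the value is the side a "yes" favours.
const QUESTIONS = {
  needsFindBookmarkTabs: "site",
  manipulatesExternalResource: "app",
  manyStableUrls: "site",
  smallOfflineBundle: "app",
  partOfBiggerWeb: "site",
  chromeDistracts: "app",
  shouldBeIndexed: "site",
};

// Count the "yes" answers on each side; unanswered questions count as "no".
function verdict(answers) {
  const votes = { site: 0, app: 0 };
  for (const [key, side] of Object.entries(QUESTIONS)) {
    if (answers[key] === true) votes[side] += 1;
  }
  if (votes.site === votes.app) return "unclear";
  return votes.site > votes.app ? "site" : "app";
}

// A hypothetical online newspaper: four "site" answers, no "app" answers.
console.log(verdict({
  needsFindBookmarkTabs: true,
  manyStableUrls: true,
  partOfBiggerWeb: true,
  shouldBeIndexed: true,
})); // "site"
```

A tie or a narrow margin is a hint that the project may really be two things: a site with an app embedded somewhere inside it.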

Over the past few years, I think, too many people have been taking things that are more naturally sites (e.g. newspapers) and trying to make them behave like apps by forcing in AJAX, hashbang URL syntax, etc. The result is typically impressive and flashy, but a crappy user experience. On the other hand, the greatest advance in the Web over the past ~10 years has been its growing ability to support activities like creating spreadsheets or editing photos that really don’t fit the REST/web-site model. So by all means, if you have an app, let it be an app, but don’t try to force everything into app world; REST is still the web’s heartbeat.